.png)

Data Talks on the Rocks 1 - Modal Labs, Pinecone, Ask-Y

Data Talks on the Rocks is a series of interviews from thought leaders and founders discussing the latest trends in data and analytics.

Data Talks on the Rocks 2 features:

- Guillermo Rauch, founder & CEO of Vercel

- Ryan Blue, founder & CEO of Tabular which was recently acquired by Databricks

Data Talks on the Rocks 3 features:

- Lloyd Tabb, creator of Malloy, and the former founder of Looker

Data Talks on the Rocks 4 features:

- Alexey Milovidov, co-founder & CTO of ClickHouse

Data Talks on the Rocks 5 features:

- Hannes Mühleisen, creator of DuckDB

Data Talks on the Rocks 6 features:

- Simon Späti, technical author & data engineer

Data Talks on the Rocks 7 features:

- Kishore Gopalakrishna, co-founder & CEO of StarTree

Data Talks on the Rocks 8 features:

- Toby Mao, founder of Tobiko (creators of SQLMesh and SQLGlot)

- Jordan Tigani, co-founder of MotherDuck

- Yury Izrailevsky, co-founder of ClickHouse

- Kishore Gopalakrishna, founder of StarTree

Data Talks on the Rocks 8 features:

- Joe Reis, author

Data Talks on the Rocks 9 features:

- Matthaus Krzykowski, co-founder & CEO of dltHub

Data Talks on the Rocks 10 features:

- Wes McKinney, creator of Pandas & Arrow

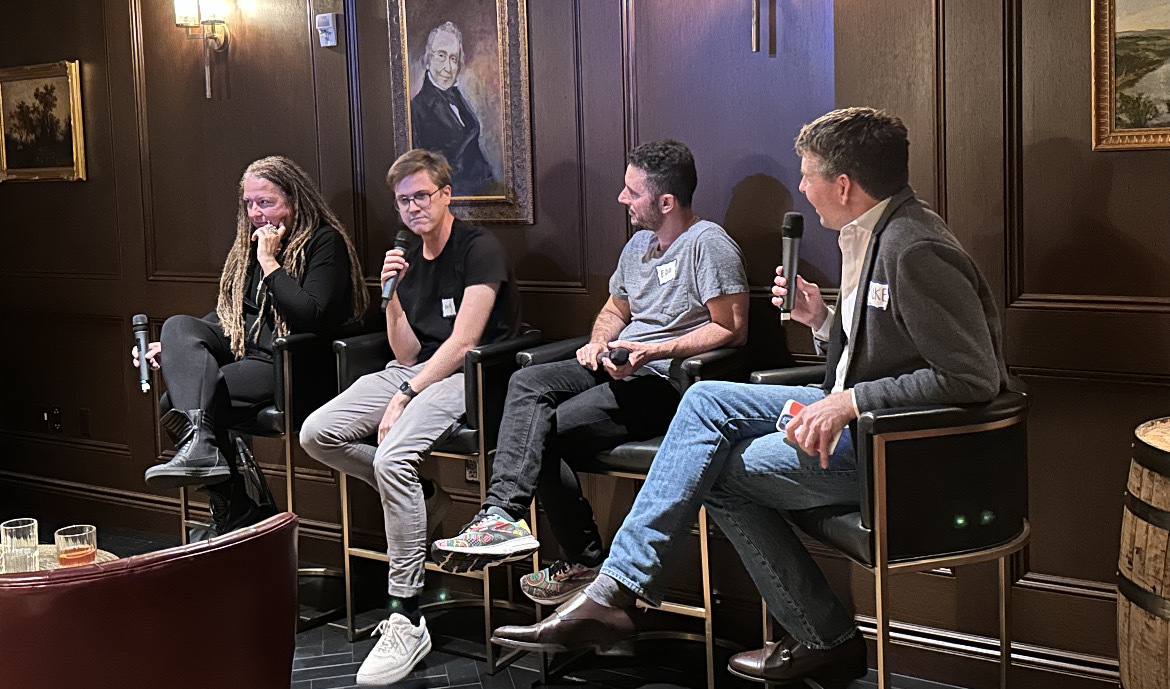

Two weeks ago I was lucky enough to bring together a few of NYC’s data, analytics, and AI luminaries for a discussion in a unique backdrop of Manhattan’s oldest distillery. Our esteemed panelists included:

- Edo Liberty is the founder & CEO of Pinecone, the leading vector-database-as-a-service offering (which has raised a total of $100mm), and a former professor of computer science. When not working, coding, or teaching, Edo is an avid surfer.

- Erik Bernhardsson is the founder & CEO of Modal Labs (which announced its $16mm Series A this week), a service which simplifies how developers scale workloads in the cloud. Fun fact, Erik has never owned a TV or a car.

- Katrin Ribant is the founder & CEO of Ask-Y, and the former founder of Dataroma, which sold to Salesforce for nearly $1B. When not building start-ups, Katrin can be found kite surfing in the Caribbean or Greece.

The full transcript of the talk can be found here and the video interview here.

At a recent Data Talks on the Rocks in New York City, Rill’s Michael Driscoll sat down with three founders building in the thick of AI, analytics, and infrastructure: Edo Liberty of Pinecone, Erik Bernhardsson of Modal, and Katrin Ribant of Ask-Y. The conversation covered moats, open source, product strategy, and what AI is actually good for — beyond the pitch deck.

There is no shortage of noise in data and AI right now.

Every week seems to bring a new framework, a new “category-defining” startup, a new infrastructure layer, and a new claim that AI will rewrite how every company builds software. The landscape is crowded, the terminology is overloaded, and the pressure to have an “AI strategy” has become nearly universal.

But beneath the noise, a more interesting question remains: what are serious founders actually building, and why?

What followed was not a generic AI panel. It was a grounded conversation about hard technical problems, changing business models, developer experience, and the uncomfortable difference between real product value and market-driven hype.

The best founders are still chasing hard proble

One of the clearest themes from the discussion was that enduring companies are usually built around problems that remain stubbornly unsolved.

For Katrin Ribant, that problem is not abstract. It is deeply specific: ETL for non-technical users.

She described it as her “white whale” — a problem she expects to chase for a long time, perhaps forever. That framing matters. In a market that often rewards breadth, Ribant made the case for obsession. Her view is that many business users still cannot get data in the form, speed, or shape they need to make decisions. For all the progress in modern data tooling, that gap remains real.

That conviction is what led her to start Ask-Y.

It is a useful reminder that new startups do not need to exist because a market is empty. Sometimes they exist because a market is crowded and still not solving the right problem.

Bernhardsson told a parallel story from a different layer of the stack. Modal began with a broad infrastructure thesis: data and ML teams still struggled with basic cloud deployment, scaling, and scheduling, despite the explosion of tooling around them. The company initially went after that foundational layer, only to discover that the market was not yet pulling hard enough.

Then generative AI arrived.

Suddenly, Modal’s primitives — especially around serverless compute and GPU support — were directly useful for a new class of workloads. Rather than abandoning the original vision, the shift sharpened it. Modal found traction by helping users deploy and scale demanding workloads without forcing them to think about infrastructure.

Liberty’s view from Pinecone was similar in spirit. Vector search, he argued, was always going to become more important as models became more common and more applications needed to work with unstructured and high-dimensional data. The challenge was never whether the problem was real. The challenge was whether someone could solve it well enough, at scale, under real-world constraints.

In other words: the opportunity was not novelty. It was execution.

In infrastructure, the moat is still the hard work

When Driscoll asked Liberty the obvious question — how Pinecone defends itself against hyperscalers, databases adding vector support, and a growing number of competitors — the answer was refreshingly unmagical.

You build a moat by doing the hard work for years.

Not by waving at a category. Not by winning a short-term narrative battle. And not by assuming big companies can out-execute specialists simply because they have more headcount.

Liberty described Pinecone’s advantage as the result of deep technical focus: researchers, engineers, and systems specialists working relentlessly on performance, scale, and reliability. Database infrastructure is not easy to copy at the level that matters most to users. The details compound. Milliseconds compound. Trust compounds.

That last point came through especially clearly when he described Pinecone’s response to a free-tier data deletion incident. Even without contractual obligations to those users, the team treated recovery and transparency as essential. In infrastructure, trust is not a marketing concept. It is part of the product.

That idea applies far beyond vector databases.

The panel repeatedly returned to a distinction that matters in today’s market: there is a difference between adding a capability and being the company users trust for that capability. In crowded categories, the latter is much harder to earn.

Open source is no longer the default answer

A second major theme was the changing role of open source in data infrastructure.

All three founders sit adjacent to open-source ecosystems. Bernhardsson created widely used open-source tools like Luigi and Annoy. Pinecone and Modal both benefit from developer ecosystems shaped by open-source models and infrastructure. And yet none of the companies represented on stage are built around a classic open-core model.

That is not hypocrisy. It is a reflection of how software distribution has changed.

Bernhardsson argued that open source made more strategic sense in a world where users expected to deploy software in their own environments. Today, much of infrastructure is consumed as a managed cloud service. That has changed the calculus. Companies are more comfortable running critical workloads in third-party environments than they were a decade ago, and cloud-native infrastructure businesses have shown that closed-source services can still win developer trust.

Liberty made a related point from the Pinecone side: if the goal is to give developers something easy, accessible, and useful, managed services are often the better vehicle. Developers do not necessarily want the burden of provisioning clusters, configuring Kubernetes, or operating complex systems themselves. They want to get started quickly and build.

That does not mean open source is dead. Ribant suggested there may be places where open source makes sense in generative AI-driven ETL, especially when community participation could strengthen a narrowly defined component. But she also noted something many founders learn the hard way: open source is not a decision you bolt on later. It tends to work best when it is part of the original strategic design.

The takeaway is not that one model is right and the other is wrong. It is that founders need to be honest about what they are optimizing for: distribution, developer love, community contribution, control, or commercial durability.

The real AI opportunity is not “AI everywhere”

Perhaps the most valuable part of the conversation was the most skeptical.

All three founders are building in markets being reshaped by AI. None of them sounded eager to sprinkle AI on everything.

Ribant offered one of the sharpest formulations of the night: AI is particularly powerful for disambiguation. When workflows contain ambiguous, incomplete, or messy inputs, and enough context exists to resolve them intelligently, AI can be transformative. But that does not mean it belongs everywhere. It remains expensive, awkward in some workflows, and poorly suited to certain high-volume or tightly constrained tasks.

Bernhardsson pushed the point even harder. He said he dislikes the framing of “what’s your AI strategy?” because it encourages teams to start with the technology instead of the user problem. His example from Spotify was instructive: machine learning mattered there because the team began with a concrete question — how to help people discover music. The models followed the problem, not the other way around.

That is the opposite of much of today’s market behavior.

Too many organizations, he argued, are still trying to throw “magic AI dust” over weak products or broken workflows. That may satisfy investors or analysts in the short term, but it rarely leads to good software.

Liberty, by contrast, was emphatic about how AI tools are already changing internal engineering workflows. At Pinecone, the use of coding copilots was not just tolerated but mandated for evaluation, and most engineers found real value in them. In that sense, AI is already here: not as AGI, but as a practical accelerator in software development.

At the product layer, Liberty described a broader specialization underway across AI systems. Models are no longer the whole story. Retrieval systems, long-term memory layers, vector databases, hardware specialization, and orchestration all play increasingly distinct roles. The future will not be one giant monolith. It will be a stack of specialized components that work together.

That is a more useful picture of the AI era than the generic idea of “one model to rule them all.”

Hype is real. So is the shift underneath it.

The panel did not dismiss the hype cycle. If anything, it named it directly.

Ribant joked that any startup fundraising today had better mention AI somewhere in the pitch. Bernhardsson noted that public companies are under similar pressure from analysts and shareholders. Everyone is expected to say something.

But the discussion also pointed to something more durable beneath the hype: a real architectural and workflow shift is underway.

AI is changing how software gets written. It is changing the kinds of infrastructure developers need. It is changing expectations around interfaces, ambiguity, search, and retrieval. It is creating new pressure on cloud platforms and new opportunities for specialized infrastructure. And it is reviving old questions in analytics about how technical systems can better serve non-technical decision-makers.

The winners in this cycle are unlikely to be the companies that talk the most about AI.

They are more likely to be the companies that understand where AI genuinely changes the user experience, where it needs support from adjacent systems, and where the hard engineering still matters more than the slogan.

What founders should take away

If there was a shared philosophy across the panel, it might be this:

Start with the real problem. Build where the pain is deep. Don’t confuse market excitement with product truth. And if you choose a hard technical category, be prepared to earn trust the slow way.

That applies whether you are building analytics software for business users, GPU infrastructure for model deployment, or databases for retrieval-heavy AI applications.

It also applies well beyond AI.

The most interesting companies in data have always been built by people willing to stay with a hard problem longer than the market thinks is reasonable. What is changing now is not that principle. It is the toolkit available to those builders — and the speed at which new abstractions are entering the stack.

The noise will continue. So will the logo landscapes.

But the builders who matter are still doing what they have always done: picking the hard thing, staying close to users, and grinding toward something that actually works.

Ready for faster dashboards?

Try for free today.

.svg)

.jpg)