Feed the beast: Building a data stack that agents love

Agent analysts demands new architectures focused on semantics, speed, and scale.

For most of analytics history, the primary source of analytical workloads have been human users. Click a filter, wait for a chart to update, and move on. Concurrency and query volumes were bounded by human attention spans and reflexes.

Agents have no such bounds. Agent-generated analytical workloads – whether accessing KPIs, investigating anomalies, or forecasting trends — generate query patterns that are far wider and deeper than a human’s. Where an analyst might issue a hundred queries in an hour through a UX, an agent investigating a single question can fire off thousands in a single minute, spawning multiple branches of inquiry in parallel based on what it finds.

Your data warehouse bill will increasingly be shaped by agent query patterns, not human ones. Agentic analytics spikes query volumes and makes query traffic towards more complex. Without a highly efficient analytics stack, cost and latency will become the bottlenecks for AI-driven insights.

At Rill, we’ve been building for high-concurrency, high-volume analytics workloads from the start, serving thousands of users exploring billions of events in our cloud application daily. Making this fast and efficient has never been just a database problem. It requires optimization at every layer of the stack, and those layers must work together tightly.

Fast analytics at scale is a full-stack problem

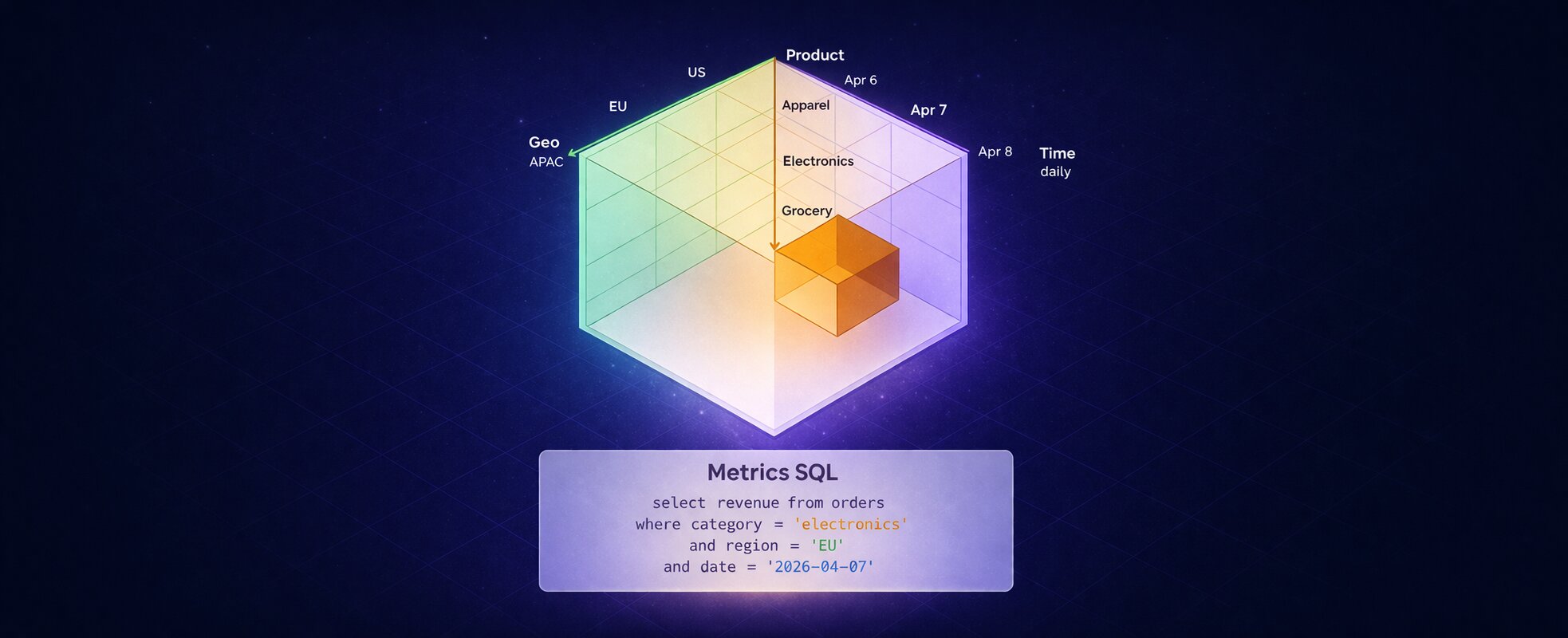

A modern analytical application has three distinct layers, and each has its own optimization surface:

- AI agents, users, and applications — the consumers. Optimizations here are about query intent: expressing what you need precisely so layers below can serve it efficiently.

- Semantic layer — translates intent into physical queries. This is where you enforce correctness (filter requirements, grain compatibility), performance (rollup routing, query rewrites, cancellation), and security (predicate injection). It's the only layer with full visibility into both what the consumer asked for and what the database actually contains.

- Database engine — execution and storage. Column encoding, index structures, projections, and compute isolation. The engine can only work with what's on disk; the data model's physical layout determines how much of data one has to scan to process a query.

No layer compensates for failures in another. A well-designed semantic layer routing to an unsorted table will still scan more data than necessary. A perfectly partitioned table served by a semantic layer that generates full table scans will result in query timeouts.

In this post, I’ll focus on the semantic layer — because in agentic analytics it’s the only layer that can simultaneously understand user intent, data topology, and execution cost. If you don’t enforce discipline here, nothing below can compensate.

Using the semantic layer to optimize query planning

The semantic layer sits between your consumers and your physical data. Every bad decision at this layer multiplies across every query downstream query.

Human analysts develop intuition. They learn which tables are expensive, when to query a rollup table, that an unfiltered query on 50 billion rows will blow past a timeout. They build habits over time through feedback — slow queries and billing alerts are visceral teachers. An AI agent doesn’t have this intuition. It generates SQL from intent, fires queries without understanding your physical topology, and retries on timeout — often making the problem worse.

The semantic layer is what closes this gap. Rill's semantic layer includes many such optimizations; this post covers the most impactful ones. Each targets a failure mode unique to agent-scale workloads.

Lazy loading: only fetch what you need and when you need

We call our core semantic-layer principle WYSIWYF — What You See Is What You Fetch. It means one thing: don't execute a query until the exact dimensions, measures, time range, and filters are known.

In traditional BI, pre-fetching broad result sets and filtering in the client was a common practice to reduce perceived latency. With agents, the cost of pre-fetching grows fast. An agent running a multi-step investigation may issue dozens of queries before finding a useful signal. If each one fetches more than needed, the waste multiplies across the whole session. And because agent workflows branch, one over-broad query often becomes the template for dozens of follow-up queries.

For dashboards, WYSIWYF means only querying panels visible in the viewport. For agents, it means translating intent to the narrowest possible SQL:

Over-fetching:

Agent asks: "Total spend by region, last week"

Query: SELECT region, date, product, channel, SUM(spend)

FROM fact_events GROUP BY ALL

→ Agent filters in memory. 500M rows scanned.

Lazy loading:

SELECT region, SUM(spend_usd)

FROM fact_events

WHERE date BETWEEN '2026-04-07' AND '2026-04-13'

GROUP BY region ORDER BY 2 DESC LIMIT 50

→ 2M rows scanned.In columnar analytical engines like ClickHouse or DuckDB, narrowing the scan before execution is the most reliable way to keep query cost predictable at agent scale.

The same logic applies across all Rill dashboards and components. For example, a pivot table with 10,000 rows doesn't need to fetch all 10,000 rows up front — it needs the first screenful. Components are virtualized and render what's visible, load more as the user scrolls through. Any panel outside the current viewport doesn't trigger a query at all. With dozens of dashboard components that might otherwise all fire simultaneously, deferring off-screen queries keeps database load from multiplying on every page load.

Keep result sets small, as agents do best with limited context

WYSIWYF ensures queries are narrow. But even a well-formed query can return more rows than an agent can usefully process. The semantic layer must enforce an upper bound on result set size.

Every row in the result becomes tokens in the agent's context window — a 10,000-row result set doesn't give the agent 10x more signal than 1,000 rows, it just consumes 10x more tokens and increases inference cost. The database doesn't know the result will be truncated downstream: a SELECT * FROM events with no LIMIT scans every row before the application layer can trim it, paying the full scan cost regardless.

Agent asks: "show me all events"

Without enforcement:

SELECT * FROM events

→ 200M rows scanned, context window exhausted

With semantic layer enforcement:

SELECT * FROM events ORDER BY spend_usd DESC LIMIT 500

→ 500 rows scanned, top campaigns by spend returned

The agent can paginate and further ask for more rows if requiredIn Rill, all agent-facing queries have a configurable row cap enforced before execution. When a query has no explicit limit, the semantic layer injects one — applying ORDER BY on the most relevant measure based on the context so the agent receives the most useful rows, not a random slice of whatever the engine returned first.

The default cap should stay small. For most analytical questions, Top 200 rows is enough to reason correctly. An agent that genuinely needs more is usually asking the wrong question — the answer is a better aggregation query, not a larger result set. And a LIMIT without a meaningful ORDER BY is usually not enough: it reduces result size, but it doesn’t guarantee usefulness. The semantic layer has to define what “top” means.

Enforce filters to limit full table scans

Most engines support predicate pushdown at the SQL level: a WHERE clause gets evaluated against partition metadata or column statistics before any data is read. Rill’s metrics layer ensures required filters are always present and expressed in a form the engine can push down.

The most common failure mode in practice: queries that omit a filter on the partition key requiring a full table scan, regardless of what other filters exist. The semantic layer can prevent this directly. If a table is partitioned on event_date, most queries against it should carry a time filter.

When a required filter is absent, the semantic layer can either reject the query with an informative error, inject a safe default (e.g. the last 30 days for a time filter), or route to a pre-aggregated table that doesn't carry the same partitioning constraints. Whichever path, the result is the same: no query reaches the engine without the filters needed for an efficient execution.

This prevents agents to issue an unbounded query and get in a retry loop after a timeout that would lead to increase compute costs significantly before anyone notices.

Intelligent query routing: using pre-aggregated datasets effectively

Pre-aggregated tables only help if your semantic layer is smart enough to use them effectively. Consider a typical product analytics stack: raw event data arrives continuously from an application. A materialized view maintains hourly page-level aggregates in near real-time. A daily batch job produces feature-level summaries. A monthly job creates a compact table for long-lookback queries. Each tier strips dimensions that aren’t needed at that granularity, compressing millions of rows into thousands:

Neither your dashboard nor your AI agent know this topology. Without intelligent routing, every query hits the base table. An agent asking “what were total events by feature last quarter?” scans hundreds of millions of raw events when the monthly summary can answer that by scanning just 20K rows.

For dashboards, poor routing means slow load times. For agents, the failure modes are harder to see: a 30-second timeout triggers a silent retry (generating more load) or returns an error.

In Rill, you declare the rollup topology alongside your metrics definition:

type: metrics_view

model: events_raw

timeseries: event_ts

rollups:

- model: events_hourly_page

time_grain: hour

- model: events_daily_feature

time_grain: day

- model: events_monthly_summary

time_grain: month

# ...Metric and dimension definitions....Before routing, Rill issues watermark queries against each rollup to see what time range it actually covers. If an Airflow job ran but landed data only through two days ago, the watermark reveals the true boundary. Watermarks are cached with a configurable TTL (default: 5 minutes).

Each rollup then passes a staged eligibility check:

Overly aggressive routing can return stale or incorrect results if grain compatibility and freshness aren’t validated. The semantic layer has to optimize for cost without breaking correctness.

Query planning and validation

While there are many small query optimizations done in Rill’s semantic layer. Three rewrites are worth discussing:

- Two-phase comparisons. Period-over-period comparisons across high-cardinality dimensions are expensive. Without a rewrite, a

FULL OUTER JOINbetween base and comparison periods produces a cardinality explosion for dimensions present in one period but not the other. - Rill’s approach is to query the base period first, extract which dimension values, then filter the comparison period to those values. For a typical period over period comparison, this rewrite changes a 5-second query to a 200ms query in our testing — a 25x performance gain.

- Time range binding: Resolve "last 7 days" to concrete timestamps against data watermarks before generating SQL. This ensures filters align with how data is physically partitioned, enabling pruning at the storage layer regardless of the engine.

- Full table scan prevention: Some queries are structurally guaranteed to scan every row — no partition filter, no useful index, an unbounded GROUP BY on a raw event table. For dashboards, these surface as slow queries that users complain about. For agents, they show up as timeouts that trigger retries, each of which also scans the full table.

Text-to-SQL generation still produces unbounded queries against large tables, as the BIRD benchmark indicates. A validator that catches those before execution is cheaper than a query timeout.

The semantic layer is the right place to catch these before they reach the engine. A query validator checks for patterns that reliably produce full scans and can reject the query with an informative error, not a generic timeout. The informative error helps the agent rethink its approach and ask a better question.

Aggressive cancellation: stop paying for abandoned work

An interactive dashboard generates hundreds of in-flight queries simultaneously. Change a filter and all of them become worthless. Agent workloads are worse: an investigation is a branching tree where entire inquiry paths get abandoned the moment a better signal appears. In our experience, 10–20% of in-flight agent queries are abandoned mid-execution as the agent pivots.

Cancellation needs to reach the engine and not stop at the application layer while the database finishes its scan. If cancellation doesn’t reach the engine, it isn’t cancellation — it’s just hiding the result.

In Rill's implementation, the chain runs end-to-end through application context system down to the engine interrupt ( e.g. ClickHouse's KILL QUERY, DuckDB's query interrupt API).

Query laning: giving priority to interactive workloads

Not all queries are equal. A fraud detection agent investigating an active breach needs a different answer time than a scheduled weekly export. If your infrastructure treats them equally, a batch job that arrives at the wrong moment starves out the queries users are actively waiting on.

Without efficient query laning and prioritization, the system can look healthy at the infrastructure level while feeling broken to whoever is waiting on a result.

Rill implements this with three prioritized query lanes.

- Metadata queries get a dedicated fast lane, these are the fastest queries to answer as the result is almost always cached.

- OLAP queries compete within a priority-ordered heap: higher-priority queries jump ahead of lower-priority ones even if those arrived first.

- Long-running operations — exports, scheduled reports, batch agent jobs — are gated behind the lowest priority lane, so they can never fully block the high priority lanes no matter how many arrive at once.

Access control with O(1) checks at any scale

Security rarely comes up in performance and efficiency discussions, but for multi-tenant analytics — especially embedded analytics where different organizations see isolated data — access control can quietly become the dominant query cost if it's poorly designed.

Storing user permissions in a lookup table and joining at query time turns a 100ms analytical query into a 10-second query against a billion-row table. The overhead is proportional to data volume, which is exactly backwards.

At Rill we encode security rules as SQL predicates computed once per request and inject them directly into the WHERE clause. The cost is then constant O(1) regardless of table size.

When the data model partitions or sorts by the security dimension, the injected predicate eliminates entire partitions before a single row is read. Predicate injection works best when the physical layout cooperates — which is one reason semantic-layer and data-model design have to be developed together.

Conclusion: Agentic analytics demands new architectures

To summarize, building for agentic analytics requires a shift in how we think about architectures:

- Agent-driven workloads are fundamentally different — they amplify query volume and make inefficiencies exponential, not linear. Without guardrails, agents will repeatedly generate inefficient queries, driving up cost and latency.

- Performance is a full-stack concern, but the semantic layer is the only layer that understands both intent and physical data layout.

- A disciplined semantic layer enforces efficiency through techniques like lazy loading, result limits, intelligent routing, and query rewrites.

Ultimately, the semantic layer has the highest leverage for balancing correctness, cost, and speed at scale. Get this layer right, and everything below it becomes more efficient by design. Get it wrong, and no amount of downstream optimizations can compensate.

Ready for faster dashboards?

Try for free today.

.svg)